The system of near-universal surveillance had been set up not just without our consent, but in a way that deliberately hid every aspect of its programs from our knowledge. At every step, the changing procedures and their consequences were kept from everyone, including most lawmakers.

— Edward Snowden, Permanent Record

Where exactly is the maximum tolerable level of surveillance, beyond which it becomes oppressive? That happens when surveillance interferes with the functioning of democracy: when whistleblowers (such as Snowden) are likely to be caught.

— Richard Stallman, How Much Surveillance Can Democracy Withstand?

Do not fear to be eccentric in opinion, for every opinion now accepted was once eccentric.

– Bertrand Russell, A Liberal Decalogue

A policeman's job is only easy in a police state.

– Orson Welles, Touch of Evil

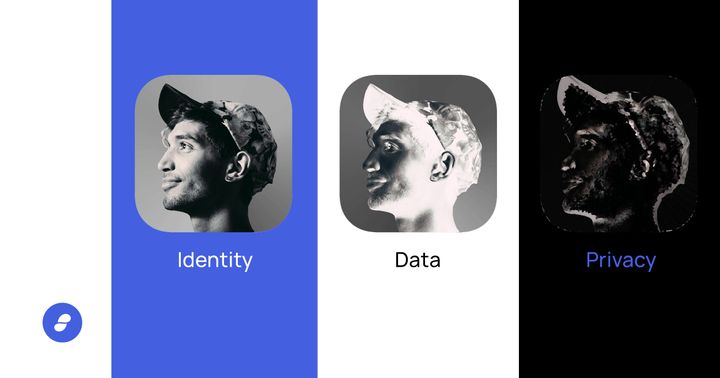

Contrary to assertions that people don’t care about privacy in the digital age, the vast majority of Americans believe that they should have more control over their data.

According to a 2015 survey by Pew Research, 93% of Americans believe that being in control of who can get information about them is important. At the same time, a similarly large majority — 90% — believe that controlling what information is collected about them is important.

However, these views are in stark contrast to how the FBI and NSA currently operate, even post-Snowden.

At a House Oversight Committee hearing in June of last year, an FBI witness revealed that the agency can match or request a match of an American’s face against at least 640 million images of adults living in the U.S. This includes driver’s license photos from 21 states, including states that do not have laws explicitly allowing them to be used in this way.

At the same hearing we also learned that the FBI believes it can use face recognition on individuals without a warrant or probable cause.

According to the ACLU:

Under FBI guidelines, agents can open an assessment without any fact-based suspicion whatsoever. Even preliminary investigations may be opened only in cases where there is mere “information or allegation” of wrongdoing, which the FBI interprets to cover mere speculation that a crime may be committed in the future.

How do we explain this inconsistency between what the public wants and what the authorities are doing?

It comes down to one word — framing — or the metaphors and moral narrative associated with an idea.

In the words of Steve Rathje:

We often metaphorically think of the mind as a machine, saying that it is “wired” to behave in certain ways. But, the mind is not simply a machine, engineered to behave entirely rationally. Instead, like a work of art, the mind thrives on metaphor, narrative, and emotion — which can sometimes overtake our rationality.

Put another way, the way we frame something determines how we think about it. And privacy is, of course, no exception. Whether we realise it or not, privacy is being framed by authority to influence our thoughts in a particular direction, even if we instinctively know there’s something not quite right about it.

To quote directly from Phillip Rogaway’s seminal paper on the moral dimension of cryptography:

I think people know at an instinctual level that a life in which our thoughts, discourse, and interactions are subjected to constant algorithmic or human monitoring is no life at all. We are sprinting towards a world that we know, even without rational thought, is not a place where man belongs.

Rogaway goes on to outline what he calls the brilliant but misleading law-enforcement framing of mass surveillance*. This persuasive and well-crafted framing is often espoused by intelligence communities around the world to justify their actions. He describes it like this:

- Privacy is a personal good. It’s about your desire to control personal information about you.

- Security, on the other hand, is a collective good. It’s about living in a safe and secure world.

- Privacy and security are inherently in conflict. As you strengthen one, you weaken the other. We need to find the right balance.

- Modern communications technology has destroyed the former balance. It’s been a boon to privacy, and a blow to security. Encryption is especially threatening. Our laws just haven’t kept up.

- Because of this, bad guys may win. The bad guys are terrorists, murderers, child pornographers, drug traffickers, and money launderers. The technology that we good guys use — the bad guys use it too, to escape detection.

- At this point, we run the risk of Going Dark. Warrants will be issued, but, due to encryption, they’ll be meaningless. We’re becoming a country of unopenable closets. Default encryption may make a good marketing pitch, but it’s reckless design. It will lead us to a very dark place.

Reading it evokes a sense of fear. A fear of crime, a fear of losing our parents’ protections, even a fear of the dark... This is no accident.

So why is it wrong? The crux of the issue here hinges on whether we can consider privacy to be a personal rather than a collective good, and whether it is correct to regard privacy and security as conflicting values.

Let’s address these separately.

Is privacy a personal or a collective good?

While it’s self-evident that privacy can be a personal good, it’s not so obvious that it can be a collective good too. How can it be a collective good?

Privacy is a collective good if the limitations placed on our privacy result in a world in which there is less space for personal exploration, and less space to challenge social norms.

I'm on the Tianjin to Beijing train and the automated announcement just warned us that breaking train rules will hurt our personal credit scores!

– Emily Rauhala (@emilyrauhala), January 3, 2018

Since social progress depends on the ability of individuals to challenge authority and the status quo, in a world in which law enforcement is watching over every little thing we do, progress inevitably slows.

If history teaches us anything, it's that almost every societal value we take as obviously true in liberal democracies today -- the right to vote, the equal rights of men and women, the right to a fair and public hearing, the right not to be held in slavery or servitude, the right to freedom of opinion and expression ... -- was once deemed eccentric and a threat to the powers that be.

Put another way, if the space in which we can express eccentric opinions or challenge authority gets smaller, then effective dissent becomes harder. And — to paraphrase Rogaway again — without dissent, social progress is unlikely.

Since social progress is, by definition, a collective good, it’s clear that things aren’t as simple as the law-enforcement framing would suggest; privacy is both a personal and a collective good.

Are privacy and security inherently in conflict?

Another way of answering this question is to flip it around: can a lack of privacy make us less secure?

We don’t have to look very hard to see that the answer is yes, it can.

To take just one example, a 2019 Citizen Lab report showed that at least 100 journalists, human rights activists and political dissidents had their smartphones attacked by spyware that exploited a vulnerability in WhatsApp. This spyware was sold only to law-enforcement and intelligence agencies, including those of at least 20 EU countries.

This is far from an isolated case. We see something like this in the news almost every day. People’s lives are regularly made less secure because of a lack of privacy, especially when they are considered a threat to state power.

It should be obvious that a world in which journalists and activists cannot communicate without law enforcement watching over them is a world that is at once less secure for the individual and worse for society over the long term.

Summary

In contrast to what law enforcement would have you believe, we've seen that privacy and security are not inherently in conflict. In particular, reducing privacy reduces the security of journalists, activists, minority groups, and all those who dare to reveal things the state would rather keep secret (in other words, those who dare to speak truth to power).

We've also seen how privacy is both a personal and a collective good -- without privacy, dissent is hard, and without dissent, social progress is unlikely.

There is also an important link between the two. The individuals who dare to speak truth to power are, by and large, the same individuals who drive social progress forward.

Equipped with these insights, we are able to see through the law-enforcement framing. The argument is nuanced and complex, though. The challenge going forward lies in coming up with the right metaphors and narratives to help the public see through it too.**

Notes

**The obvious looming metaphorical battleground today is the fight for the future of end-to-end encryption. To quote from a recent EFF article:

The last few months have seen a steady stream of proposals, encouraged by the advocacy of the FBI and Department of Justice, to provide “lawful access” to end-to-end encrypted services in the United States. Now lobbying has moved from the U.S., where Congress has been largely paralyzed by the nation’s polarization problems, to the European Union—where advocates for anti-encryption laws hope to have a smoother ride. A series of leaked documents from the EU’s highest institutions show a blueprint for how they intend to make that happen, with the apparent intention of presenting anti-encryption law to the European Parliament within the next year.

While at first glance it may seem that the law-enforcement messaging has evolved to appear more diplomatic, the core of the narrative -- that we must choose either safety or privacy -- remains the same:

The WePROTECT Global Alliance, NCMEC and a coalition of more than 100 child protection organisations and experts from around the world have all called for action to ensure that measures to increase privacy – including end-to-end encryption – should not come at the expense of children’s safety

If we are to stand a chance of winning this battle, we need to come up with narratives that are equally simple, but closer to the truth.

*Rogaway goes on to contrast it with the — almost orthogonal — cypherpunk / surveillance-studies framing:

- Surveillance is an instrument of power. It is part of an apparatus of control. Power doesn’t need to be in-your-face to be effective: subtle, psychological, nearly invisible methods can actually be more effective.

- While surveillance is nothing new, technological changes have given governments and corporations an unprecedented capacity to monitor everyone’s communication and movement. Surveilling everyone has become cheaper than figuring out whom to surveil, and the marginal cost is now tiny. The internet, once seen by many as a tool for emancipation, is being transformed into the most dangerous facilitator for totalitarianism ever seen.

- Governmental surveillance is strongly linked to cyberwar. Security vulnerabilities that enable one enable the other. And, at least in the USA, the same individuals and agencies handle both jobs. Surveillance is also strongly linked to conventional warfare. As Gen. Michael Hayden has explained, “we kill people based on metadata.” Surveillance and assassination by drones are one technological ecosystem.

- The law-enforcement narrative is wrong to position privacy as an individual good when it is, just as much, a social good. It is equally wrong to regard privacy and security as conflicting values, as privacy enhances security as often as it rubs against it.

- Mass surveillance will tend to produce uniform, compliant, and shallow people. It will thwart or reverse social progress. In a world of ubiquitous monitoring, there is no space for personal exploration, and no space to challenge social norms either. Living in fear, there is no genuine freedom.

- But creeping surveillance is hard to stop, because of interlocking corporate and governmental interests. But cryptography offers at least some hope. With it, one might carve out a space free of power’s reach.

Reading this evokes a sense of injustice, a sense that our liberty has been infringed upon, even taken away, without us realising. Digging into this narrative is the subject of another essay :)

Thanks to Jessica Steiner, Felix Saint-Leger, Tom Saint-Leger, and Barnabé Monnot for reading drafts of this.